Infectious keratitis is a prevalent source of vision loss. While data from the 1990s put the incidence at 30,000 cases per year in the United States, a newer study suggests this number has more than doubled.1,2 A small but significant percentage of these eyes go on to require corneal transplantation: between 2% and 6% will require an urgent transplant, and an even higher percentage will require surgery to ameliorate resultant scars.3,4 A smaller number, perhaps as high as 1.8% of ulcers seen at academic centers, go on to require enucleation or evisceration.5

Because of the potential for severe vision loss, microbial ulcer management requires critical thinking at nearly every juncture, as well as careful and thoughtful follow-up. Depending on the severity of infection at diagnosis, the virulence of the particular microbe and patient-specific features, corneal infections can sometimes progress despite aggressive and appropriate intervention. The good news, however, is that when these infections are identified and treated early, the odds of a favorable outcome are much greater.


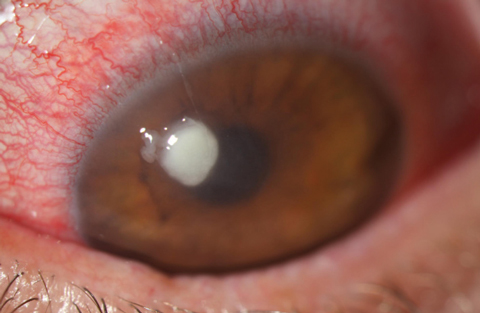

Fig. 1. Here is a look at a typical Staphylococcus ulcer. Note its uniform density and well-defined borders. This organism was resistant to fluoroquinolones but responded well to dual fortified agents.

When to Culture

The first and most critical step in the management of microbial keratitis is determining whether the infiltrate is infectious. Key features in making this distinction include a supportive risk factor (e.g., contact lens wear), a dense, round or oval infiltrate with ulceration (meaning there is an epithelial defect) and an infiltrate larger than a pinhead; suspicion should rise the more centrally the lesion is located.6 Before initiating any therapy, practitioners should decide whether to culture in house, refer for culturing or pursue purely empiric treatment.

For full disclosure, I culture every ulcer that I believe is infectious, and I find this appropriate for several reasons. First, culturing takes practice, and even in the hands of an experienced clinician, it yields a positive result only 38% to 72% of the time.7,8 In this regard, practice makes perfect, or at least better. Of course, you don’t practice on a patient just to hone an unnecessary skill, which brings me to my next point: although culturing removes only a tiny amount of material, removing infectious material should be beneficial, at least from a therapeutic standpoint, though this remains unproven. Finally, culturing adds both clinical and medical-legal weight to your treatment decisions. While inappropriate treatment does not dramatically reduce culture yield, you only get one “best chance,” and that is before treatment begins.8-10

Even ineffective therapy reduces culture yield, and in eyes that fail to respond, the future, like the infected cornea, is opaque. You can’t look at a series of ulcers and reliably predict which ones will not respond to therapy, so having culture results in hand if things deteriorate can prove invaluable. My own practice patterns aside, not all presumed infectious ulcers seen in community clinics need culturing; in fact, most respond well to empiric therapy with commercially available agents. However, ulcers that are larger than 2mm, are central or paracentral, have an unusual appearance or have an unusual history such as trauma should be cultured or referred for culturing. Also, if you intend to refer for culturing within 24 hours, you are better off not initiating antibiotic therapy.


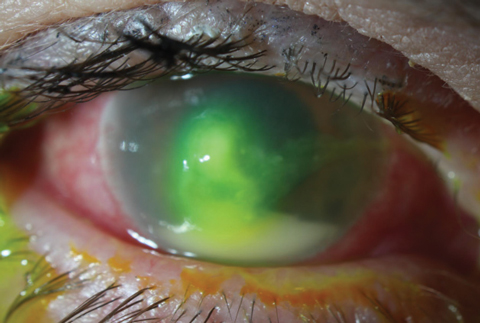

Fig. 2. In contrast to the Staphylococcal ulcer in Figure 1, this mid-stage Pseudomonas aeruginosa ulcer is more mucoid in appearance and slightly whirled. The large hypopyon is not necessarily suggestive of etiology; rather, it reflects the intensity of the inflammatory response.

Clinical Differentiation

Regardless of whether you culture, nearly all initial treatment begins empirically, as culture results take one to seven days to process.11 Establishing effective initial treatment is perhaps the most critical step in setting the stage for a positive outcome. Research shows that patients who fail incorrect initial empiric therapy have a markedly higher rate of keratoplasty and a significantly greater cost of therapy, so planning deserves careful consideration.7 The managing physician should assess the most likely source of infection based on history, clinical presentation and likely pathogen susceptibility. The first consideration here is the likely infectious etiology. Certainly no one would knowingly treat a fungal infection with antibiotics, so some level of differentiation is necessary.

Beyond this general differentiation, distinguishing among the various bacterial sources of infection is also a key part of managing corneal ulcers. First, consider the influence of geographic location. Almost all etiologic reviews of microbial keratitis agree there is tremendous variability in causative pathogens by location. A study out of Paraguay showed a 79% incidence of Staphylococcus etiology, while another from Australia found only a 17% incidence.12 This variability is mirrored among regions of the United States. Independent of risk factors such as contact lens use, Pseudomonas aeruginosa accounts for a higher percentage of corneal ulcers in the southeastern United States than in more temperate or desert climates.2,10,13 Fungal ulcers are more common in tropical climates, and in the southeastern United States, rates of fungal keratitis are double or triple those seen in more temperate zones.14-16 Geography doesn’t change the clinical appearance of the ulcers, but those practicing in Florida, for example, need a higher index of suspicion for fungal ulcers than someone in the southwestern United States.

Next in the process of differentiating ulcers is attending to specific risk factors. First, age plays a role in which organism to suspect. Gram-negative ulcers affect young patients much more frequently than patients over age 60, in whom gram-positive etiologies predominate.16 It’s also well established that gram-negative species, particularly Pseudomonas, have a stronger association with contact lens use, whereas postoperative corneal infections and those associated with ocular surface disease are most typically caused by Staph. species.10,16-19 Beyond its widely understood link to fungal infection, trauma also carries a risk of atypical bacterial infections such as nontuberculous Mycobacterium and Nocardia.18

Pathogens that make up a large part of the normal flora infect the eye opportunistically, so in compromised corneas, Staph. species, as dominant members of the normal flora, predominate. Pseudomonas is a minor part of the ocular flora, so it requires a delivery vehicle, in this case a contact lens, to be inoculated onto the eye. Fungal species and atypical bacteria are usually absent from the eye and likewise require an inoculating vehicle, most often environmental or organic trauma. As a result, ulcers with histories such as organic trauma almost always warrant initial culturing regardless of clinical appearance. Fungal and atypical ulcers also have a dramatically worse prognosis than the more typical etiologies.8,10

That said, history doesn’t guarantee any particular etiology, and a large number of contact lens-related ulcers will be caused by Staph. species.17,20 Risk factors also evolve over time: while trauma was once the primary risk factor for fungal keratitis in the United States, contact lens use now probably outstrips it as the top association. Because of this, the final clue guiding clinical suspicion should be the ulcer’s appearance.21-23

The ulcer’s clinical appearance must be mined for hints about which organism is responsible. Staph. species typically produce a dry, discrete infiltrate, which may be accompanied by hypopyon and surrounding edema. Pseudomonas ulcers, in contrast, are much wetter and more mucoid in appearance and look as though they could be debrided relatively easily. These ulcers may also have a somewhat whirled, non-uniform density, whereas Staph. ulcers tend to be more uniform throughout.

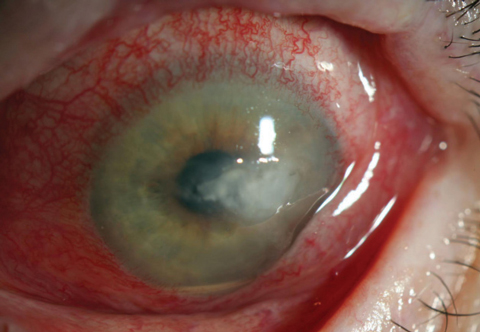

Fungal ulcers can vary widely in clinical appearance. A significant percentage of fungal infiltrates display characteristic features such as feathery margins or satellite infiltrates, but over half have non-specific features, and in one review as many as 87% of fungal ulcers were misdiagnosed.20 Given this, fungal infection has to remain on the differential for essentially all corneal ulcers until a positive response to antibiotics occurs.


Fig. 3. Note the lack of any classic defining features of fungal ulceration in this Candida ulcer. There was no history of trauma or injury in this case to suggest a fungal source. Unfortunately, which cases should have been cultured is sometimes apparent only in hindsight.

Treatment

Once a clinical differential has been made, focus should shift to therapy. It’s increasingly important to use critical thinking to differentiate not only among fungal, bacterial and amoebic ulcers, but also among likely bacterial etiologies when selecting initial therapy.

Twenty years ago, we were practicing in an era of relatively effective monotherapy. Fluoroquinolones were effective against most ocular pathogens, and as the class evolved, their coverage improved.24 Fluoroquinolone monotherapy was the norm in community practices. Empiric treatment with a single fluoroquinolone was successful approximately 90% of the time, even in eyes with demonstrated in vitro resistance.25 Currently, however, as fluoroquinolone resistance continues to expand among gram-positive pathogens, this strategy can be expected to fail more frequently.

Although widespread resistance to fluoroquinolones has essentially been limited to Staph. species, and causative etiologies vary widely by geographic location, published data give a general idea of how frequently the ulcers we encounter will be resistant to fluoroquinolones. In the United States, between 10% and 25% of all corneal ulcers should be expected to be resistant to gatifloxacin and moxifloxacin.1-11,13,14,24,26 If most practitioners who manage corneal ulcers use a single fourth-generation fluoroquinolone, we have to expect this treatment to fail in as many as one in four cases. Considering that inappropriate initial therapy places patients at a greatly increased risk of needing keratoplasty and a much higher cost of therapy, this is cause for concern.

Some will wonder whether besifloxacin avoids this problem, and there are several reasons to think it might. First, it has no systemic equivalent, meaning it should in theory be spared the resistance driven by systemic use. The chlorofluoroquinolone also has a somewhat different molecular structure than gatifloxacin and moxifloxacin, which theoretically should reduce the cross-resistance common among fluoroquinolones.14 Additionally, the ARMOR study found its minimum inhibitory concentration (MIC) against methicillin-resistant Staphylococcus aureus (MRSA) to be nearly as low as that of vancomycin.27

Unfortunately, for those of us in community clinics, there is no practical way to confirm that any of this still holds. The difficulty with besifloxacin starts with its being an ophthalmic-only preparation. Because of this, it is not part of the standard MIC panel used in culture and sensitivity testing (a systemically derived test), and most community microbiology labs cannot provide the same information for it. Therefore, besifloxacin has to be used on trust, assuming it will work. In our clinic at least, besifloxacin, which is still our go-to commercially available agent, has not performed any better than moxifloxacin or gatifloxacin against bugs resistant to fourth-generation fluoroquinolones (as determined by MIC testing).

Still, fluoroquinolones perform very well against nearly all common non-Staph. bacterial pathogens and are reasonable monotherapy in most cases. This is especially true for Pseudomonas, the most common non-Staph. cause of bacterial keratitis, which has extremely low rates of resistance (although it is actually more susceptible to older-generation agents).6,10-15,24,26-29 Given the overall rate of resistance to fourth-generation fluoroquinolones, driven by expanding MRSA and methicillin-resistant Staphylococcus epidermidis (MRSE) species, and the good coverage fluoroquinolones provide against most other ophthalmic pathogens, it’s fair to say we are in a bit of a bind. If you are clinically unable to differentiate between “likely” Staph. ulcers and “likely” non-Staph. ulcers, monotherapy with a fourth-generation fluoroquinolone runs a relatively high risk of encountering resistance and failing initially, increasing the risk of a poor outcome.

Because of all this, I don’t believe monotherapy is currently the best practice for managing corneal ulcers. Instead, it would be wise to treat all presumed bacterial but undifferentiated ulcers with commercially available dual therapy. Dual-agent treatment may seem unwieldy or undesirable, but it needn’t be. We need only look at how cornea clinics have managed ulcers all along, with dual broad-spectrum fortified agents, to see that commercially available dual therapy brings our practice patterns back in line with what is done at the highest levels.

When selecting agents, I still believe a fourth-generation fluoroquinolone is the most reasonable starting point. It can be paired with one of the older commercially available agents that performed well against MRSA and MRSE isolates in the Ocular TRUST 2 study: Polytrim (trimethoprim/polymyxin B sulfate, Allergan) solution, tobramycin solution 0.3%, gentamicin solution 0.3% or bacitracin ophthalmic ointment. With this combination, practitioners should encounter fewer treatment failures due to resistance. As with any corneal ulcer, initial dosing should be aggressive. With dual agents, I apply the loading dose over 15 minutes and send the patient home with instructions to use both medications every hour throughout the day, one drop on the hour and the other on the half hour. I also recommend that the patient wake every two to three hours during the night to administer the drops until improvement is noted.

From here, close follow-up is necessary to ensure treatment success, and any unexpected change for the worse should be met with an immediate response. Culturing or re-culturing, empirically changing treatment and referring the patient to a cornea clinic are all appropriate steps; the only plainly inappropriate one is to continue watching the eye deteriorate. Along with failure of the initial treatment, delayed referral to a cornea clinic is the other significant risk factor for keratoplasty in these eyes, so when initial treatment fails, it’s imperative to change course.30 By taking a watchful, conservative approach, using critical thinking to guide initial diagnosis and treatment decisions and responding decisively and aggressively to any change for the worse, you give your patient the best chance of a positive outcome.

Dr. Bronner is an attending optometrist at Pacific Cataract and Laser Institute in Kennewick, Wash.

1. Pepose JS, Wilhelmus KR. Divergent approaches to the management of corneal ulcers. Am J Ophthalmol. 1992;114(5):630-2.